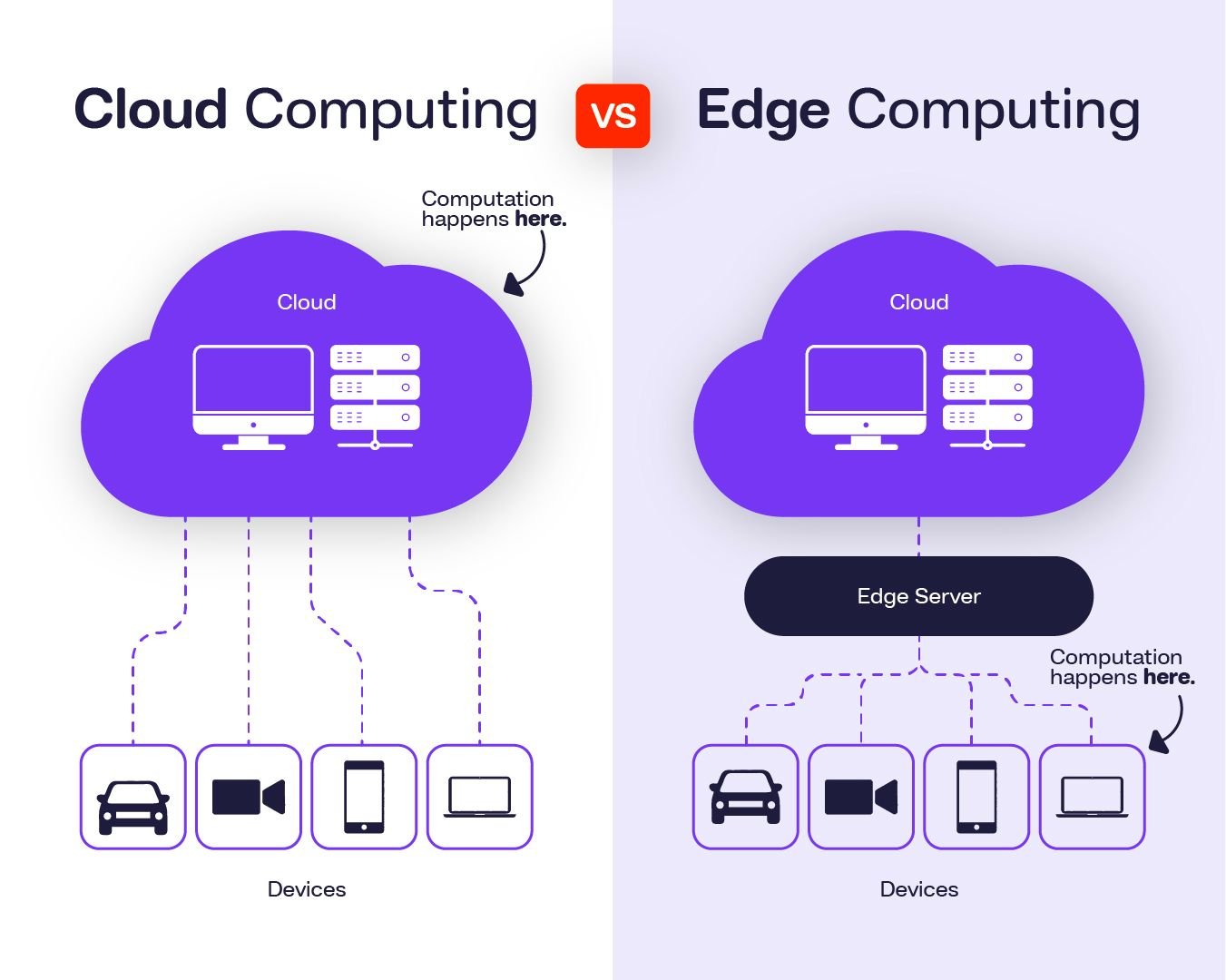

The architecture of how computers process information is undergoing a structural change. For most of the past decade, the dominant model was centralized: data generated by devices (smartphones, sensors, cameras, industrial machines) was sent over the internet to large data centers (the cloud) and processed there, and the results were sent back. This model works well for many applications, but it introduces a delay set by the physical distance the data must travel and by the time required to transmit, process, and return results. For applications that require near-instant response, such as autonomous vehicles, industrial robotics, remote medical procedures, augmented reality overlays, and real-time threat detection, this delay, however small in absolute terms, can be unacceptable. Edge computing moves the processing closer to where the data is generated, which dramatically reduces that round-trip time.

What Edge Computing Is

Edge computing is not a single product or technology but an architectural approach: placing computing resources — processors, memory, storage — at or near the source of data generation rather than routing everything to a central cloud. An edge computing node might be a specialized processor embedded in a factory machine, a server at a telecommunications tower, a processor unit inside a smart camera, or a ruggedized computer at a hospital that processes medical imaging locally. The defining characteristic is physical and network proximity to the data source.

The performance difference between edge and cloud processing is most significant for latency-sensitive applications. A cloud server located hundreds or thousands of kilometers away introduces network delays measured in tens to hundreds of milliseconds — small enough to be imperceptible for many tasks, but too large for an autonomous vehicle needing to respond to a road obstacle in real time, for a robotic surgery system requiring precision movement control, or for an industrial quality-control system that must detect defects on a high-speed production line. Edge processing can reduce effective latency to single-digit milliseconds.
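To make the distance argument concrete, here is a rough back-of-the-envelope sketch. It assumes signals travel through optical fiber at about 200,000 km/s (roughly two-thirds of the speed of light in a vacuum) and counts only propagation delay; routing, queuing, and processing time come on top of it. The function names and the example distances and speeds are illustrative, not taken from any particular system.

```python
# Assumption: signal speed in optical fiber is about two-thirds of the
# speed of light in a vacuum, i.e. roughly 200,000 km per second.
FIBER_SPEED_KM_PER_S = 200_000.0


def round_trip_propagation_ms(distance_km: float) -> float:
    """Lower bound on round-trip time from propagation alone, in milliseconds.

    Real round trips are longer: this ignores routing, queuing, and processing.
    """
    one_way_s = distance_km / FIBER_SPEED_KM_PER_S
    return 2.0 * one_way_s * 1000.0


def distance_travelled_m(speed_kmh: float, delay_ms: float) -> float:
    """Distance in meters that a vehicle covers while waiting delay_ms."""
    speed_m_per_s = speed_kmh * 1000.0 / 3600.0
    return speed_m_per_s * delay_ms / 1000.0


if __name__ == "__main__":
    # Illustrative distances: a nearby edge node versus a distant data center.
    for km in (1, 100, 1000, 5000):
        print(f"{km:>5} km: at least {round_trip_propagation_ms(km):.2f} ms round trip")

    # Illustrative case: a vehicle at 100 km/h waiting 100 ms for a response.
    print(f"{distance_travelled_m(100, 100):.2f} m travelled during a 100 ms delay")
```

Under these assumptions, a data center 1,000 km away costs at least about 10 ms per round trip before any processing happens, and a vehicle moving at 100 km/h covers roughly 2.8 m during a 100 ms delay.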

Why It Matters in 2026

Several converging trends are making edge computing increasingly important. The proliferation of IoT devices, including industrial sensors, smart city infrastructure, connected vehicles, and wearable health monitors, is generating data volumes that would be expensive and slow to route entirely through centralized cloud infrastructure. The growth of AI inference applications, which run trained AI models to analyze video streams, recognize speech, or detect anomalies, creates demand for fast, local processing that a round trip to the cloud cannot always deliver. And the expansion of 5G networks, which provide higher bandwidth and lower latency than 4G, makes it easier to connect edge nodes to each other and to the cloud.

In manufacturing, edge computing enables real-time quality inspection using computer vision — cameras and processors embedded in production lines that detect defects in milliseconds without sending video to a cloud server. In healthcare, edge processing allows medical imaging systems to analyze scans locally within hospital infrastructure, with results available in seconds rather than minutes, and with patient data remaining within the facility rather than traversing external networks. In retail, edge AI processes camera feeds locally to analyze customer behavior or inventory levels without requiring continuous cloud connectivity.
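The manufacturing case can be framed as a simple timing budget. The sketch below assumes, as a simplification, that each inspection decision must be ready before the next item arrives, so the budget per item is the inverse of the line speed. The line speed, network round-trip times, and inference time are made-up illustrative numbers, and the function names are hypothetical.

```python
def per_item_budget_ms(items_per_second: float) -> float:
    """Time available per item, in milliseconds, at a given line speed."""
    return 1000.0 / items_per_second


def fits_budget(network_rtt_ms: float, inference_ms: float,
                items_per_second: float) -> bool:
    """True if network round trip plus inference fits within the per-item budget.

    Simplifying assumption: no pipelining, so each decision must finish
    before the next item reaches the camera.
    """
    total_ms = network_rtt_ms + inference_ms
    return total_ms <= per_item_budget_ms(items_per_second)


if __name__ == "__main__":
    line_speed = 20.0    # illustrative: 20 items per second, so 50 ms per item
    inference_ms = 15.0  # illustrative model inference time

    # Illustrative round-trip times: a remote cloud region versus a local edge node.
    print("cloud fits:", fits_budget(80.0, inference_ms, line_speed))
    print("edge fits: ", fits_budget(2.0, inference_ms, line_speed))
```

With these example numbers the cloud path misses the 50 ms budget while the edge path fits comfortably. A real line may pipeline inspections or have a different deadline, so the check is only a first approximation.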

Edge vs. Cloud: Not a Competition

The relationship between edge computing and cloud computing is complementary rather than competitive. Data that requires immediate local action — a machine stopping when it detects an unsafe condition — is processed at the edge. Data that requires aggregation, historical analysis, model training, or long-term storage continues to flow to the cloud. The architecture is hybrid: edge handles time-sensitive, latency-critical processing; cloud handles scale, long-term data analysis, and applications where response time is not critical.
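The hybrid split described above might be sketched as follows. This is a minimal illustration, not a reference design: the `Reading` and `EdgeNode` classes, the temperature threshold, and the batching behavior are all hypothetical. The safety decision is made locally and immediately, while every reading is queued for a later, non-urgent upload to the cloud.

```python
from dataclasses import dataclass, field
from typing import List, Set


@dataclass
class Reading:
    machine_id: str
    temperature_c: float


@dataclass
class EdgeNode:
    # Hypothetical safety limit; a real system would load this from configuration.
    max_safe_temperature_c: float = 90.0
    cloud_batch: List[Reading] = field(default_factory=list)
    stopped_machines: Set[str] = field(default_factory=set)

    def handle(self, reading: Reading) -> str:
        """Make the time-critical decision locally, then queue data for the cloud."""
        if reading.temperature_c > self.max_safe_temperature_c:
            # Latency-critical path: act at the edge without a network round trip.
            self.stopped_machines.add(reading.machine_id)
            action = "stop"
        else:
            action = "continue"
        # Non-urgent path: keep the reading for aggregation and historical analysis.
        self.cloud_batch.append(reading)
        return action

    def flush_to_cloud(self) -> List[Reading]:
        """Return the queued readings and clear the local queue.

        A real implementation would send the batch to a cloud service here.
        """
        batch, self.cloud_batch = self.cloud_batch, []
        return batch


if __name__ == "__main__":
    node = EdgeNode()
    print(node.handle(Reading("press-1", 72.5)))
    print(node.handle(Reading("press-2", 95.0)))
    print(len(node.flush_to_cloud()), "readings sent for long-term analysis")
```

Because the stop decision never waits on the upload, the node can keep acting on unsafe conditions when the connection to the cloud is slow or temporarily unavailable.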

Major cloud providers, including AWS, Microsoft Azure, and Google Cloud, have all invested substantially in edge computing frameworks that extend their services to edge nodes, so that developers can reuse much of the same code and tooling across cloud and edge environments. This integration means that building edge computing applications does not require abandoning cloud infrastructure; it requires extending it.

For India specifically, edge computing is particularly relevant to the country’s digital ambitions. Telemedicine platforms that need reliable, low-latency video connections for rural medical consultations, smart agriculture systems that must process sensor data in real time in fields with intermittent connectivity, and manufacturing automation in India’s growing industrial corridors are all applications where local processing capacity could deliver measurable improvements over cloud-dependent architectures. As 5G infrastructure expands and the cost of edge compute hardware continues to decline, edge deployment is becoming more practical across sectors.