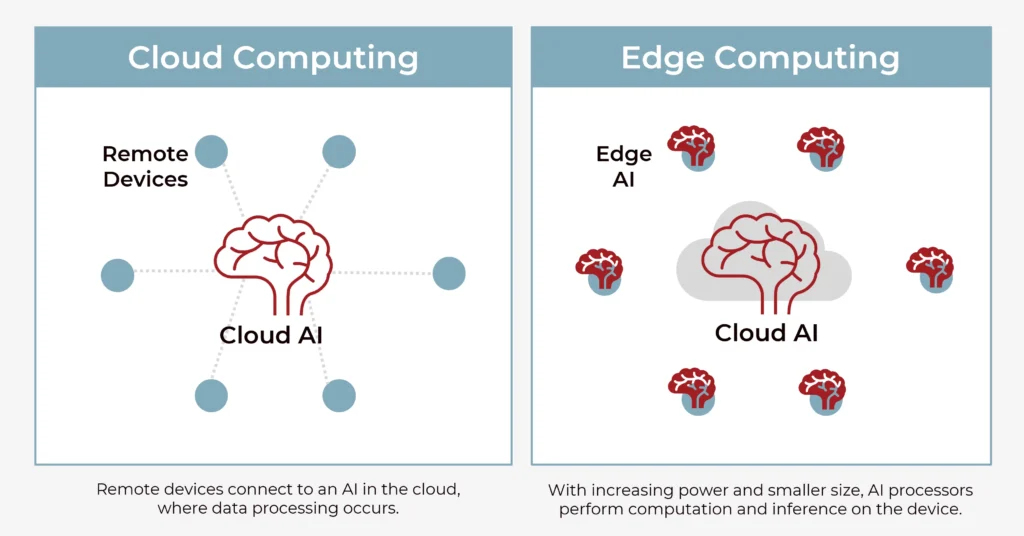

The shift of AI computation from centralized cloud servers to local device hardware, known as Edge AI, is one of the most consequential infrastructure transitions in the current technology cycle. Understanding why it is happening, what it enables, and where it is already deployed requires examining both the technical drivers and the practical uses that have made local AI processing not just possible but commercially preferred for certain categories of applications.

The fundamental driver is latency. Running an AI inference request on a cloud server means sending the input from the device to a remote data center, processing it there, and transmitting the result back. For many applications this round trip is acceptable. For applications that need real-time response, such as voice assistants that should feel instantaneous, autonomous vehicle systems that must react within milliseconds, and augmented reality overlays that must update with every head movement, cloud latency introduces delays that degrade the experience or create safety risks.
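The latency argument is ultimately arithmetic: every network hop adds to the time before a result is available, and real-time applications have a fixed budget per update. The sketch below compares a cloud round trip with local inference against the per-frame budget of a display refreshing at 90 Hz. All timing values, and the names `LatencyProfile`, `frame_budget_ms`, and `fits_budget`, are hypothetical and chosen only for illustration; they are not measurements of any real device or service.

```python
from dataclasses import dataclass


@dataclass
class LatencyProfile:
    """Per-request latency components in milliseconds (illustrative values only)."""
    network_rtt_ms: float   # device -> data center -> device round trip
    server_queue_ms: float  # time spent waiting for a server slot
    inference_ms: float     # time spent running the model itself

    def total_ms(self) -> float:
        return self.network_rtt_ms + self.server_queue_ms + self.inference_ms


def frame_budget_ms(refresh_hz: float) -> float:
    """Time available per display frame at a given refresh rate."""
    return 1000.0 / refresh_hz


def fits_budget(profile: LatencyProfile, budget_ms: float) -> bool:
    """True if the whole request completes within one frame."""
    return profile.total_ms() <= budget_ms


# Hypothetical numbers: the server runs the model slightly faster than the
# device, but the network round trip dominates the cloud total.
cloud = LatencyProfile(network_rtt_ms=40.0, server_queue_ms=5.0, inference_ms=8.0)
local = LatencyProfile(network_rtt_ms=0.0, server_queue_ms=0.0, inference_ms=9.0)

budget = frame_budget_ms(90)  # assumed AR display refreshing at 90 Hz
print(f"budget: {budget:.1f} ms")
print(f"cloud:  {cloud.total_ms():.1f} ms, fits={fits_budget(cloud, budget)}")
print(f"local:  {local.total_ms():.1f} ms, fits={fits_budget(local, budget)}")
```

Even with a faster server, the assumed cloud path misses an 11 ms frame budget because the network round trip alone exceeds it; the local path fits despite slower raw inference.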

The second driver is privacy. Sending voice recordings, camera images, or biometric data to a cloud server for processing means the data leaves the device and travels across networks under conditions the user cannot fully control. Processing it locally keeps the raw data on the device, a property with both individual value and regulatory compliance implications under frameworks such as the EU’s GDPR and India’s Digital Personal Data Protection (DPDP) Act.

The third driver is connectivity independence. A cloud-dependent AI application fails when network connectivity is unavailable or degraded. Edge AI applications function in remote environments, on aircraft, in basements, and in rural settings where reliable broadband is absent.

On the device side, the hardware enabling Edge AI is the Neural Processing Unit (NPU), a dedicated processor architecture optimized for the matrix multiplication operations that underlie AI inference. Apple’s Neural Engine, first introduced in 2017 with the A11 Bionic chip, is now present in every iPhone since the iPhone 8 and iPhone X and in every Mac with Apple silicon; recent generations can perform tens of trillions of operations per second for AI tasks while consuming a fraction of the power that equivalent GPU-based processing would require. Qualcomm’s Snapdragon X series, used in Windows laptops, includes an NPU rated at 45 TOPS (trillion operations per second). The spread of capable NPUs across consumer devices has made on-device AI inference fast, power-efficient, and available at scale.
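A TOPS rating becomes meaningful once it is set against the number of operations a model actually needs. The sketch below counts the operations in a matrix multiply and converts a total into a lower-bound execution time at a given TOPS figure. It assumes the common convention that one multiply-accumulate counts as two operations, and it treats the rated figure as a peak: the `utilization` factor is an assumed fraction of that peak actually sustained, and the 24-layer model shape is hypothetical.

```python
def matmul_ops(m: int, k: int, n: int) -> int:
    """Operations for an (m x k) @ (k x n) matrix multiply.

    Each of the m*n outputs needs k multiply-accumulates; counting the
    multiply and the add separately gives 2*m*k*n operations.
    """
    return 2 * m * k * n


def ideal_time_ms(total_ops: float, tops: float, utilization: float = 1.0) -> float:
    """Lower-bound execution time in milliseconds at a given TOPS rating.

    `utilization` is an assumed fraction of the rated peak that the
    workload actually sustains (1.0 means perfect use of the hardware).
    """
    ops_per_second = tops * 1e12 * utilization
    return total_ops / ops_per_second * 1000.0


# Hypothetical model: 24 dense layers, each with a 4096 x 4096 weight
# matrix, applied to a batch of 128 input vectors.
layers = 24
ops = layers * matmul_ops(m=128, k=4096, n=4096)

print(f"total ops: {ops / 1e12:.2f} trillion")
print(f"at 45 TOPS, 100% utilization: {ideal_time_ms(ops, 45):.1f} ms")
print(f"at 45 TOPS, 30% utilization:  {ideal_time_ms(ops, 45, 0.3):.1f} ms")
```

Memory bandwidth, precision, and scheduling usually keep real workloads well below the rated peak, which is why the utilization factor is exposed as a parameter rather than fixed at 1.0.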

The practical applications already running at the edge include: voice assistants (Siri, Google Assistant) that perform wake-word detection locally and increasingly handle complete queries locally; camera systems that apply AI scene recognition, computational photography, and video processing on-device; security cameras that detect specific events locally without streaming continuous video to the cloud; smartphone features like on-device translation, real-time audio transcription, and AI photo editing; and industrial IoT sensors that classify equipment anomalies at the sensor rather than transmitting raw data streams to central servers.
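The pattern shared by the security camera and industrial sensor examples is that the device inspects its own data and transmits only the events worth reporting. The sketch below illustrates this with a rolling z-score detector: each new reading is compared with the mean and standard deviation of the previous readings, and only large deviations are kept for transmission. The z-score rule is a deliberately simple stand-in for the trained classifiers such devices may run, and the function name `detect_anomalies`, the window size, the threshold, and the synthetic vibration signal are all hypothetical.

```python
from collections import deque
from statistics import mean, pstdev
from typing import Deque, Iterable, List, Tuple


def detect_anomalies(
    readings: Iterable[float],
    window: int = 20,
    threshold: float = 3.0,
) -> List[Tuple[int, float]]:
    """Return (index, value) pairs that deviate sharply from recent history.

    Each reading is compared with the mean and population standard
    deviation of the previous `window` readings. Only readings more than
    `threshold` standard deviations away are reported; everything else
    stays on the sensor.
    """
    history: Deque[float] = deque(maxlen=window)
    events: List[Tuple[int, float]] = []
    for i, value in enumerate(readings):
        if len(history) == window:
            mu = mean(history)
            sigma = pstdev(history)
            # Skip the check when the history is perfectly flat (sigma == 0).
            if sigma > 0 and abs(value - mu) / sigma > threshold:
                events.append((i, value))
        history.append(value)
    return events


# Synthetic vibration signal with small periodic variation and one fault spike.
signal = [1.0 + 0.01 * ((i % 5) - 2) for i in range(200)]
signal[150] = 5.0  # simulated equipment fault

events = detect_anomalies(signal)
print(f"transmitted {len(events)} of {len(signal)} readings: {events}")
```

Only the flagged reading would leave the device, instead of the full 200-sample stream; a real deployment would also need to decide how long an outlier should be allowed to influence the rolling statistics.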

Apple Intelligence, announced in 2024 and expanded in 2025 and 2026, is the most visible consumer implementation of Edge AI: a suite of features including writing tools, photo processing, Siri enhancements, and context-aware suggestions that run primarily on-device, using the Neural Engine in iPhone, iPad, and Mac hardware. Apple’s explicit positioning of on-device processing as a privacy differentiator, together with its Private Cloud Compute architecture for handling requests that exceed local capacity, shows the degree to which Edge AI has become a competitive product dimension rather than merely a technical optimization.
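A hybrid design of this kind, local by default with escalation to a server only when a request exceeds what the device can handle, can be sketched as a small routing function. This is not Apple’s API and does not model how Private Cloud Compute works internally; the types `Request`, `DeviceCapacity`, and `Route`, the token limit, and the `needs_large_model` flag are hypothetical stand-ins for whatever capability checks a real system performs.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    ON_DEVICE = "on_device"
    CLOUD = "cloud"
    UNAVAILABLE = "unavailable"


@dataclass
class Request:
    prompt_tokens: int        # size of the input (hypothetical measure)
    needs_large_model: bool   # hypothetical flag: task beyond the local model


@dataclass
class DeviceCapacity:
    max_local_tokens: int     # hypothetical limit of the on-device model
    online: bool              # whether a network connection is available


def route(req: Request, cap: DeviceCapacity) -> Route:
    """Prefer local execution; escalate only when the request exceeds local capacity."""
    fits_locally = (not req.needs_large_model) and req.prompt_tokens <= cap.max_local_tokens
    if fits_locally:
        return Route.ON_DEVICE  # never depends on connectivity
    if cap.online:
        return Route.CLOUD
    return Route.UNAVAILABLE


phone_online = DeviceCapacity(max_local_tokens=4096, online=True)
phone_offline = DeviceCapacity(max_local_tokens=4096, online=False)

print(route(Request(prompt_tokens=300, needs_large_model=False), phone_offline).name)
print(route(Request(prompt_tokens=300, needs_large_model=True), phone_online).name)
```

Because the on-device branch never consults `online`, requests that fit locally keep working without a network; only the escalated requests depend on connectivity, which is the trade-off a local-first design accepts.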