Medicine has historically been a reactive profession. A patient develops symptoms, seeks care, receives a diagnosis, and begins treatment. The entire system — hospitals, diagnostic laboratories, pharmaceutical pipelines, insurance structures — is built around this reactive model. Artificial intelligence is creating the conditions for a fundamental shift: a healthcare system that predicts disease before symptoms develop, identifies risk in populations that appear clinically normal, and intervenes years earlier than traditional medicine permits. The tools making this possible are real, deployed at scale in several countries, and producing verified clinical outcomes. They also carry unresolved challenges in bias, accountability, and data governance that no amount of optimism should be allowed to obscure.

The Scale of the Transformation

By 2026, the global AI-in-healthcare market is projected to exceed $200 billion, growing at an annual rate of over 35%. The transformation is already visible in radiology, oncology, cardiology, and neurology — fields where early detection saves lives.

A 2025 AMA survey found that 66% of physicians are already using health-AI tools — up from 38% in 2023 — and 68% believe AI positively contributes to patient care in some way. The adoption curve is accelerating, driven by a combination of demonstrated clinical value, workforce shortages, and the growing availability of electronic health record data that AI systems can analyze.

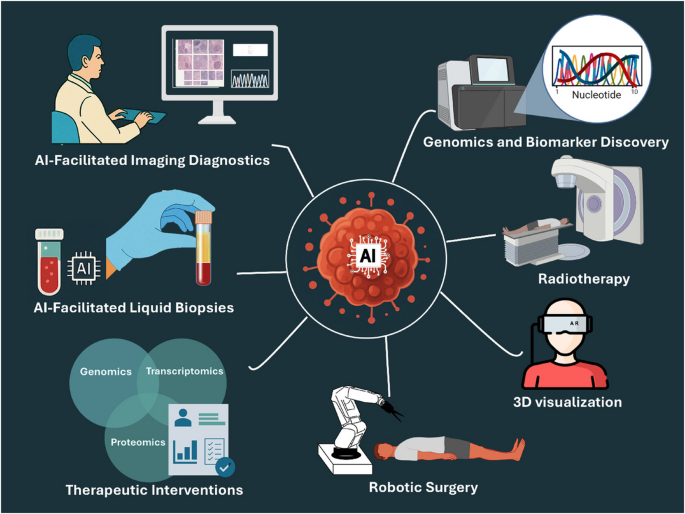

Cancer Detection: Where AI Has Had the Greatest Impact

The highest-profile application of AI in early disease detection is radiology, specifically the analysis of medical imaging to identify early-stage cancers that human radiologists might miss or catch later. In one reported evaluation, a deep learning system trained on extensive annotated imaging datasets achieved a diagnostic accuracy of 94% in detecting lung nodules, compared with 65% for human radiologists on the same task. The implementation relieved radiologists of routine screening tasks, freeing more of their time for complex cases that require nuanced clinical judgment.

AI can detect early signs of breast or lung cancer from imaging scans with accuracy exceeding 95% in controlled studies. In cardiology, algorithms predict heart attack risks years in advance by analyzing blood tests and ECG data. Neurological disorders like Alzheimer’s can now be identified up to a decade before symptoms manifest, allowing preventive therapies to start earlier. These figures are drawn from peer-reviewed studies under research conditions; real-world clinical performance across heterogeneous patient populations is typically lower and should be evaluated against each specific deployment’s validation data.

Cardiac Screening: From Annual Checkups to 15-Second AI Assessment

One of the most practically significant 2025 developments in AI-assisted early detection is a stethoscope-like device that uses AI to screen for cardiac conditions at the primary care level. The device transmits recordings to a secure cloud platform, where AI evaluates cardiac patterns too subtle for clinicians to hear. It can screen for three major conditions (heart failure, atrial fibrillation, and valve disease) in about 15 seconds. In trials across 200 primary care practices covering more than a million patients, clinicians using the device were two to three times more likely to catch these conditions early. Because heart failure is often diagnosed only after emergency hospitalization, earlier detection could reduce both mortality and healthcare spending.

The device is reportedly not intended for general population screening, because false positives remain a concern, but it represents a meaningful advance for patients with symptoms or risk factors who would previously have waited for specialist referral.
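The concern about false positives in general population screening follows from base rates, and a short calculation makes it concrete. The sketch below applies Bayes' rule to a screening test; the sensitivity, specificity, and prevalence values are illustrative assumptions chosen for the example, not the device's published performance.

```python
def positive_predictive_value(sensitivity: float, specificity: float,
                              prevalence: float) -> float:
    """Probability that a positive screen is a true case (Bayes' rule)."""
    true_positives = sensitivity * prevalence
    false_positives = (1.0 - specificity) * (1.0 - prevalence)
    return true_positives / (true_positives + false_positives)


# Hypothetical operating point (assumed for illustration only).
SENSITIVITY = 0.90
SPECIFICITY = 0.90

# Hypothetical prevalence in two screening settings (also assumed).
settings = [("general population", 0.01), ("symptomatic patients", 0.20)]

for label, prevalence in settings:
    ppv = positive_predictive_value(SENSITIVITY, SPECIFICITY, prevalence)
    print(f"{label}: prevalence={prevalence:.0%}, PPV={ppv:.1%}")
# general population: prevalence=1%, PPV=8.3%
# symptomatic patients: prevalence=20%, PPV=69.2%
```

Under these assumed numbers, the same test yields mostly false alarms when the condition is rare and mostly true cases when it is common, which is why restricting use to patients with symptoms or risk factors changes the value of a positive result.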

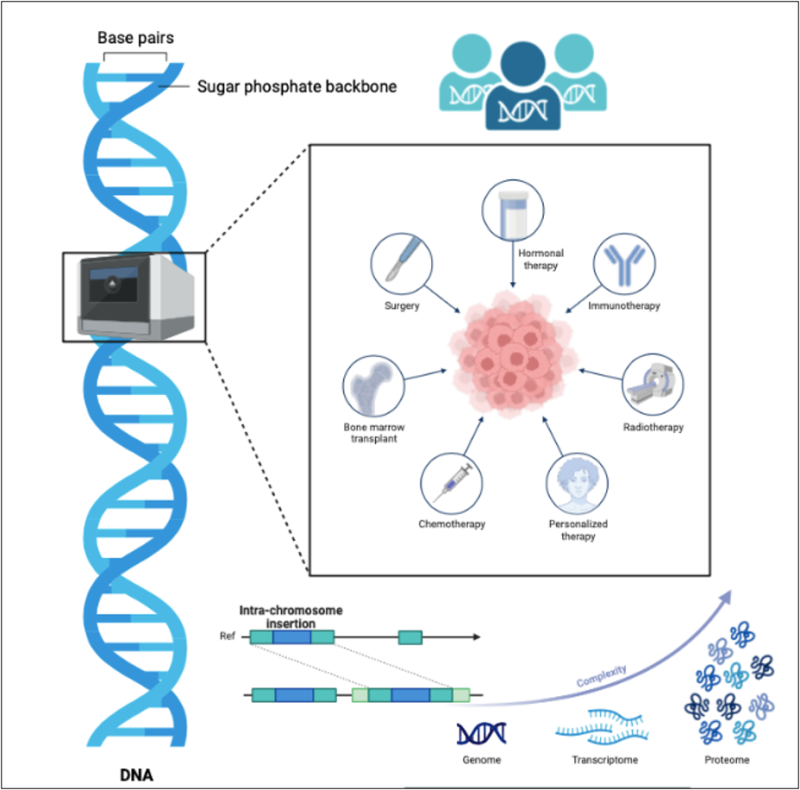

Genomics: Identifying Disease-Causing Variants With Unprecedented Precision

A research team from Harvard Medical School and the Centre for Genomic Regulation introduced PopEVE, an AI model described in Nature Genetics that evaluates whether a genetic variant is benign, disease-causing, or linked to early versus later-life mortality. PopEVE combines evolutionary data spanning hundreds of thousands of species with large-scale human datasets, including the UK Biobank and gnomAD. In nearly all cases where a causal mutation was already known, PopEVE ranked that mutation as the most damaging in that patient's genome. It also produced fewer false positives than other leading models, including AlphaMissense.

Rare genetic diseases often leave families searching for a diagnosis for years, with many patients never receiving a definitive answer. Models like PopEVE could substantially shorten that diagnostic journey — not by replacing clinical genetics expertise but by dramatically narrowing the field of variants requiring human review.

Predictive Analytics: From Reactive to Proactive Healthcare

Johns Hopkins Hospital and Microsoft Azure AI collaborated on implementing AI-driven predictive analytics that draw on electronic health records, medical imaging, and genomic information. Their algorithms were trained to predict patient outcomes, including disease progression, readmission risk, and response to treatment. The implementation was reported to improve patient care by enabling providers to intervene early, prevent complications, and tailor treatments to individual patient profiles.

Predictive modeling using AI identifies patterns and trends that may not be apparent through traditional statistical methods. By leveraging AI’s predictive capabilities, the healthcare sector can shift from a predominantly reactive model to a more proactive and preventative one. AI-driven predictive modeling opens the door to personalized medicine by using machine learning algorithms that analyze genetic, epigenetic, and proteomic data to devise treatment plans for individual patients.

The Bias and Accountability Problems: Non-Negotiable Caveats

The transformative potential of AI in healthcare cannot be discussed honestly without addressing its most serious unresolved challenges. AI systems can inadvertently amplify existing healthcare inequities. Bias enters in two ways: through the training data (data bias) and through model design (algorithmic bias). Minority bias occurs when protected groups are underrepresented in datasets, which degrades performance when algorithms analyze these populations. For example, cardiovascular risk prediction algorithms trained predominantly on male patient data often give inaccurate assessments for female patients, whose symptoms can present differently. Algorithms that show bias against certain demographics could violate the principles of bioethics: justice, autonomy, beneficence, and non-maleficence. (Source: Press Information Bureau)
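One common way to surface this kind of bias is to report performance per subgroup rather than as a single aggregate. The sketch below computes sensitivity (the share of true cases the model flags) by group; the records are synthetic, made-up counts for illustration, and the function name `sensitivity_by_group` is hypothetical.

```python
from collections import defaultdict


def sensitivity_by_group(records):
    """Return {group: sensitivity} for (group, has_disease, flagged) records."""
    true_positives = defaultdict(int)
    positives = defaultdict(int)
    for group, has_disease, flagged in records:
        if has_disease:
            positives[group] += 1
            if flagged:
                true_positives[group] += 1
    return {g: true_positives[g] / positives[g] for g in positives}


# Synthetic records (assumed): the model misses more true cases among women,
# who are also underrepresented in this made-up dataset.
records = (
    [("male", True, True)] * 45 + [("male", True, False)] * 5
    + [("female", True, True)] * 6 + [("female", True, False)] * 4
    + [("male", False, False)] * 150 + [("female", False, False)] * 40
)

rates = sensitivity_by_group(records)
flagged_cases = sum(1 for _, has_disease, flagged in records if has_disease and flagged)
all_cases = sum(1 for _, has_disease, _ in records if has_disease)
overall = flagged_cases / all_cases

print(f"overall sensitivity: {overall:.2f}")
for group, rate in sorted(rates.items()):
    print(f"{group}: {rate:.2f}")
```

In this synthetic example the aggregate figure looks acceptable while the underrepresented group fares markedly worse, which is the pattern an aggregate-only report would hide.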

As AI tools take on more decision-support or semi-autonomous roles, the question of accountability becomes pressing: who is responsible if an AI-enabled recommendation leads to harm — the clinician, the hospital, or the developer? Patient trust often lags behind physician enthusiasm. Clear explanations from clinicians, transparency, strong data governance, and solid evidence of performance remain critical for building confidence.

These are not peripheral concerns. A diagnostic AI that performs with 94% accuracy in the research population used to train it may perform significantly worse in a rural Indian hospital with different patient demographics, imaging equipment, and data quality. Deployment context matters as much as benchmark performance — a distinction that regulators, clinicians, and hospital administrators need to maintain rigorously as adoption accelerates.
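A simple check a deploying site could run is to compare the benchmark figure against its own validation sample, with an interval that reflects the sample size. The sketch below uses a Wilson score interval; the local counts (168 correct out of 200 cases) are hypothetical, and only the 94% benchmark comes from the text above.

```python
import math


def wilson_interval(correct: int, total: int, z: float = 1.96):
    """Wilson score interval for a proportion (z=1.96 is roughly 95%)."""
    p = correct / total
    denom = 1 + z ** 2 / total
    centre = (p + z ** 2 / (2 * total)) / denom
    half_width = z * math.sqrt(
        p * (1 - p) / total + z ** 2 / (4 * total ** 2)
    ) / denom
    return centre - half_width, centre + half_width


BENCHMARK_ACCURACY = 0.94  # research-population figure quoted in the text

# Hypothetical local validation sample (assumed numbers).
LOCAL_CORRECT, LOCAL_TOTAL = 168, 200

low, high = wilson_interval(LOCAL_CORRECT, LOCAL_TOTAL)
benchmark_plausible = low <= BENCHMARK_ACCURACY <= high

print(f"local accuracy: {LOCAL_CORRECT / LOCAL_TOTAL:.1%}")
print(f"95% interval: {low:.1%} to {high:.1%}")
print(f"benchmark within interval: {benchmark_plausible}")
```

With these assumed counts the benchmark falls above the local interval, which would be a signal to investigate before relying on the published figure at that site. A real validation would also need to look at sensitivity and specificity separately, not accuracy alone.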