Quantum computing has spent decades as one of the most consequential technologies under development and one of the most persistently misunderstood. In 2025 and early 2026, the field crossed a series of milestones that moved it measurably closer to practical utility — without yet delivering the transformative applications that both researchers and company roadmaps have long promised. Understanding what quantum computers actually are, what they can and cannot do today, and what the verified progress means for the coming decade requires setting aside both hype and excessive skepticism in favor of a more careful reading of what the evidence shows.

The Basics: What Makes a Quantum Computer Different

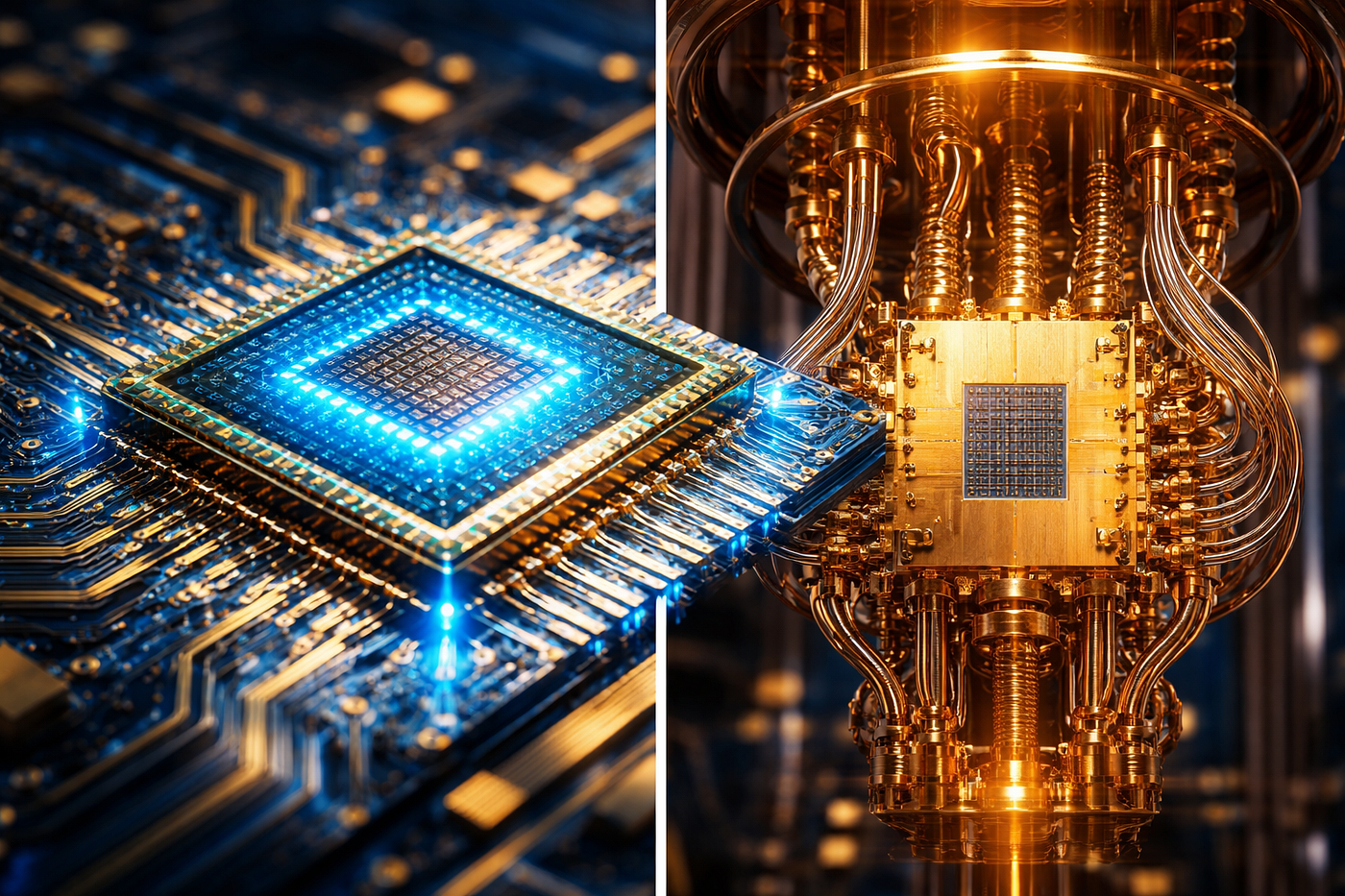

Classical computers (the devices running every smartphone, server, and laptop in existence) process information as bits, each of which exists in one of two states: 0 or 1. Quantum computers replace bits with qubits, which exploit two principles of quantum mechanics: superposition and entanglement. Superposition allows a qubit to exist in a weighted combination of 0 and 1 until it is measured, at which point it yields a single definite value. Entanglement links two or more qubits so that their measurement outcomes are correlated regardless of physical distance, although this correlation cannot be used to send information. These properties do not simply let a quantum computer try every possible solution at once, because a measurement returns only one outcome. Instead, quantum algorithms use interference to amplify the amplitudes of correct answers and cancel those of wrong ones, which for certain types of problems takes far fewer steps than any known classical method.
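To make superposition and entanglement concrete, here is a minimal sketch that simulates two qubits on a classical machine using only the Python standard library. The function names (`apply_hadamard`, `apply_cnot`, `bell_state`) are chosen for this illustration and do not come from any quantum SDK. The sketch stores one amplitude per bit string, which is exactly the bookkeeping that becomes infeasible classically as the number of qubits grows.

```python
import math


def apply_hadamard(state, target, n_qubits):
    """Apply a Hadamard gate to qubit `target` (qubit 0 is the leftmost bit)."""
    scale = 1 / math.sqrt(2)
    mask = 1 << (n_qubits - 1 - target)
    new_state = [0.0] * len(state)
    for index, amplitude in enumerate(state):
        # H|0> = (|0> + |1>)/sqrt(2) and H|1> = (|0> - |1>)/sqrt(2)
        sign = -1.0 if index & mask else 1.0
        new_state[index & ~mask] += scale * amplitude        # target bit = 0
        new_state[index | mask] += sign * scale * amplitude  # target bit = 1
    return new_state


def apply_cnot(state, control, target, n_qubits):
    """Flip qubit `target` in every basis state where qubit `control` is 1."""
    control_mask = 1 << (n_qubits - 1 - control)
    target_mask = 1 << (n_qubits - 1 - target)
    new_state = [0.0] * len(state)
    for index, amplitude in enumerate(state):
        destination = index ^ target_mask if index & control_mask else index
        new_state[destination] += amplitude
    return new_state


def measurement_probabilities(state, n_qubits):
    """Map each bit string to the probability of observing it."""
    return {format(i, f"0{n_qubits}b"): abs(a) ** 2 for i, a in enumerate(state)}


def bell_state():
    """Build (|00> + |11>) / sqrt(2) from |00> with one Hadamard and one CNOT."""
    state = [1.0, 0.0, 0.0, 0.0]         # start in |00>
    state = apply_hadamard(state, 0, 2)  # put qubit 0 into superposition
    return apply_cnot(state, 0, 1, 2)    # entangle qubit 1 with qubit 0


if __name__ == "__main__":
    for bits, probability in measurement_probabilities(bell_state(), 2).items():
        print(bits, round(probability, 3))
```

In the resulting state, only the outcomes 00 and 11 have nonzero probability, each at one half. Either qubit measured alone looks like a fair coin, yet the two results always agree, which is the correlation the paragraph above describes.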

This does not mean quantum computers are universally faster than classical computers. They are not. For most everyday tasks, such as browsing the internet, running a spreadsheet, or generating a document, a quantum computer offers no advantage and would currently perform worse than the laptop in front of you. The expected speedups apply specifically to problems whose mathematical structure quantum algorithms can exploit: simulation of molecular systems, certain cryptographic problems such as integer factoring, and, more speculatively, some optimization and machine learning tasks. The goal of the field is to build machines that can solve such problems better than any classical computer, a threshold researchers call quantum advantage.

The 2025 Milestones: What Has Actually Been Demonstrated

Google’s Willow quantum chip, featuring 105 superconducting qubits, achieved a critical milestone in late 2024 by demonstrating that logical error rates fell exponentially as more physical qubits were added to the error-correcting code, a regime known as operating “below threshold.” The Willow chip also completed a random circuit sampling benchmark in approximately five minutes that, by Google’s own estimate, would take a classical supercomputer 10^25 years to perform.

This result generated significant attention because it provided strong evidence that scaling quantum computers up does not necessarily mean scaling errors up, which addresses a fundamental barrier that has limited the field for years. However, commentary in Nature and from independent researchers noted important qualifications: logical error rates remain orders of magnitude higher than needed for practical algorithms, the demonstrations have been limited to quantum memory preservation rather than full gate operations, and vastly larger qubit arrays will be required for real-world applications.

IBM, which operates one of the most extensive commercial quantum computing programs in the world, made a more directly commercial announcement at its Quantum Developer Conference in November 2025. IBM unveiled its IBM Quantum Nighthawk processor as its most advanced yet and announced a path to delivering quantum advantage, the point at which a quantum computer can solve a problem better than all classical-only methods, by the end of 2026. The company also announced the IBM Quantum Loon experimental processor, which it says demonstrates all the key components needed for fault-tolerant quantum computing.

IBM expects future iterations of the Nighthawk processor to run circuits with up to 7,500 two-qubit gates by the end of 2026 and up to 10,000 gates by 2027. The company targets a fully fault-tolerant quantum computer, called IBM Quantum Starling, by 2029, comprising approximately 200 logical qubits supported by around 10,000 physical qubits and capable of running circuits with 100 million error-corrected operations.
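The Starling figures imply two useful back-of-the-envelope numbers: how many physical qubits are spent per logical qubit, and how reliable each error-corrected operation must be for a 100-million-operation circuit to finish cleanly. The sketch below works both out. The independence assumption and the 50% success target are illustrative choices made here, not IBM specifications.

```python
import math

# Company targets quoted in the text for IBM Quantum Starling, not measured results.
LOGICAL_QUBITS = 200
PHYSICAL_QUBITS = 10_000
ERROR_CORRECTED_OPERATIONS = 100_000_000


def physical_per_logical(physical_qubits, logical_qubits):
    """Average number of physical qubits spent on each logical qubit."""
    return physical_qubits / logical_qubits


def max_error_per_operation(operations, success_probability):
    """Largest per-operation error p with (1 - p) ** operations >= success_probability.

    Assumes every operation fails independently with the same probability,
    a simplification made for this sketch.
    """
    # Solve (1 - p)^N = s for p; expm1 keeps precision when p is tiny.
    return -math.expm1(math.log(success_probability) / operations)


if __name__ == "__main__":
    print("physical qubits per logical qubit:",
          physical_per_logical(PHYSICAL_QUBITS, LOGICAL_QUBITS))
    # Hypothetical target: the whole circuit succeeds at least half the time.
    print("max error per operation:",
          max_error_per_operation(ERROR_CORRECTED_OPERATIONS, 0.5))
```

Under these assumptions the overhead is about 50 physical qubits per logical qubit, and each error-corrected operation would need to fail less often than roughly seven times in a billion.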

Microsoft took a structurally different approach to the same challenge. In February 2025, the company unveiled Majorana 1 — a quantum processor based on topological qubits rather than the superconducting qubits used by IBM and Google. Topological qubits are theoretically more stable and less error-prone by design, addressing the decoherence problem differently than the error correction approach pursued by competitors. The practical performance advantages of topological qubits over superconducting qubits at scale remain to be demonstrated.

The Error Correction Problem: The Central Challenge

In 2025, quantum error correction became the shared priority for reaching utility-scale quantum computing, with industry experts treating it as a crucial competitive differentiator. Investment followed: quantum companies attracted substantial funding, producing multi-billion-dollar valuations for public companies such as IonQ, Rigetti, and D-Wave and for private firms including Quantinuum, valued at approximately $10 billion, and PsiQuantum, valued at $7 billion.

The problem quantum error correction addresses is fundamental: qubits are extraordinarily fragile. Environmental interference (heat, vibration, electromagnetic noise) causes them to lose their quantum state, producing errors. A classical computer’s error rate is so small as to be practically nonexistent for most applications. Current quantum computers have error rates that make running long, complex computations unreliable without active correction mechanisms. Building those mechanisms, which themselves require additional qubits to implement, is the central engineering challenge standing between the field’s current state and widespread commercial utility.
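The trade-off described above, spending extra qubits to buy reliability, can be illustrated with the simplest possible scheme: a classical repetition code decoded by majority vote. This is only an analogy. Real quantum codes must also handle phase errors, cannot copy quantum states, and rely on noisy measurements, so their thresholds are far lower than the 50% threshold of this toy model. The function name `logical_error_rate` is introduced here for illustration.

```python
from math import comb


def logical_error_rate(physical_error, copies):
    """Probability that a majority of `copies` independent bits are flipped.

    Each bit flips independently with probability `physical_error`; the
    encoded value is lost only when more than half of the copies flip.
    """
    if copies % 2 == 0:
        raise ValueError("use an odd number of copies so majority vote cannot tie")
    majority = copies // 2 + 1
    return sum(
        comb(copies, flips)
        * physical_error ** flips
        * (1 - physical_error) ** (copies - flips)
        for flips in range(majority, copies + 1)
    )


if __name__ == "__main__":
    for physical_error in (0.01, 0.6):  # one value below the toy threshold, one above
        rates = [logical_error_rate(physical_error, d) for d in (1, 3, 5, 7)]
        print(physical_error, ["%.3g" % r for r in rates])
```

With a physical error rate of 1%, every pair of added copies pushes the logical error rate down sharply, which mirrors the "below threshold" behavior reported for Willow. With a physical error rate of 60%, adding copies makes matters worse, which is why hardware quality has to cross a threshold before scaling helps.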

IBM expects the first verified quantum advantage cases by the end of 2026, while multiple companies target fault-tolerant systems by 2029, with utility-scale commercial applications expected in the early 2030s. These are company projections, not independent verifications, and the history of quantum computing is marked by timelines that slipped well past initial projections.

What Quantum Computing Will Eventually Change

The applications receiving the most serious research investment are those where the problems’ mathematical structure maps well onto quantum algorithms. Drug discovery and materials science are the most frequently cited near-term domains: sufficiently large, error-corrected quantum computers are expected to simulate molecular interactions with an accuracy that classical systems cannot match, potentially accelerating pharmaceutical development significantly. JPMorgan Chase has partnered with IBM to explore quantum algorithms for option pricing and risk analysis, with early studies indicating quantum models could outperform classical Monte Carlo simulations in both speed and scalability.

Cryptography represents perhaps the most concerning long-term implication. A fault-tolerant quantum computer with sufficient scale could break the RSA and elliptic curve encryption algorithms that currently protect the majority of internet communications and digital financial transactions. Research published in 2025 suggested that breaking 2048-bit RSA may require fewer than one million noisy physical qubits, down from an earlier estimate of 20 million. The US National Institute of Standards and Technology finalized three post-quantum cryptography standards in August 2024, which organizations are now advised to begin implementing. The practical threat to existing encryption is not imminent, since hardware capable of breaking RSA at scale does not yet exist, but the timeline is shortening.
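Why factoring matters can be shown with a deliberately tiny RSA key. In the sketch below, anyone who can factor the public modulus can rebuild the private exponent and decrypt. The brute-force `trial_division_factor` stands in for the factoring step that a large fault-tolerant quantum computer running Shor's algorithm would perform on real key sizes. The primes 53 and 61, the exponent 17, and the function names are illustrative choices, and nothing here resembles a secure implementation.

```python
def trial_division_factor(n):
    """Return a factor pair (p, q) of n by brute force; feasible only for tiny n."""
    candidate = 2
    while candidate * candidate <= n:
        if n % candidate == 0:
            return candidate, n // candidate
        candidate += 1
    raise ValueError("n has no nontrivial factors")


def recover_private_exponent(modulus, public_exponent):
    """Rebuild the RSA private exponent from the public key alone by factoring."""
    p, q = trial_division_factor(modulus)
    phi = (p - 1) * (q - 1)
    # Modular inverse via three-argument pow (Python 3.8+).
    return pow(public_exponent, -1, phi)


if __name__ == "__main__":
    modulus, public_exponent = 53 * 61, 17   # toy public key
    message = 65
    ciphertext = pow(message, public_exponent, modulus)

    # The attacker sees only (modulus, public_exponent, ciphertext).
    private_exponent = recover_private_exponent(modulus, public_exponent)
    print(pow(ciphertext, private_exponent, modulus) == message)
```

For a 2048-bit modulus the loop in `trial_division_factor` would never finish in practice, and the best known classical factoring methods are also infeasible at that size. That gap is what current RSA deployments rely on, and it is the gap a sufficiently large quantum computer would close.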

Quantum computing is no longer purely theoretical, nor is it yet commercially transformative. It is in the critical transition phase between laboratory demonstration and practical deployment — a phase that will likely define the next five to seven years of the field’s development.